Most media content about AI today reads like supermarket tabloids—sensational, shallow, and often misleading. These pieces can confuse more than they clarify. The reasons are numerous: clickbait incentives, genuine ignorance among writers, and biased enthusiasm from those selling AI products.

Most authors of such articles have never taken a foundational computer science course, written code, or run AI software.

Here are twelve filters for detecting fake and misleading articles about artificial intelligence:

When AI headlines sound apocalyptic or miraculous, it’s time to slow down. Sensational claims are often inflated to grab attention, sometimes by writers who genuinely believe their own forecasts and sometimes by writers who are simply chasing clicks.

History shows that dramatic predictions routinely miss the mark, so claims of technological wonders should be met with skepticism and a demand for evidence.

Writers frequently use qualifying language to avoid being wrong while suggesting dramatic advances. Words like “developing” and “expected” imply progress that hasn’t occurred but exists only in the dreams of those reporting.

Watch for imprecise wording that signals the technology has not yet been developed.

Some forecasters gain attention with distant, dramatic prophecies that can’t be checked until we’re all dead or nobody cares anymore. Dystopian forecasts set far in the future often fall into this category.

Appeals to “scientific consensus” are frequently used to shut down debate, but consensus does not determine truth—evidence does. Many major historical breakthroughs defied prevailing opinion, and past consensus predictions have often failed. In fast-moving fields like AI, claims based on agreement rather than data deserve skepticism.

Materialism, the belief that all reality is physical, can limit scientific inquiry by ruling out non-material explanations. This worldview dominates much of science, including AI, and often suppresses alternative perspectives. In the field of AI, materialists cannot escape the assumption that we are all computers made of meat. True understanding requires following the evidence wherever it leads, without letting any ideology control, distort, or restrict the search for truth.

Seductive semantics uses emotionally charged language to make ideas seem more meaningful than they are. In AI, terms like “self-aware,” “hallucinations,” and “human intelligence” can mislead by implying human-like traits in machines. Clear thinking requires precise definitions, not slippery words that invite misleading anthropomorphizing.

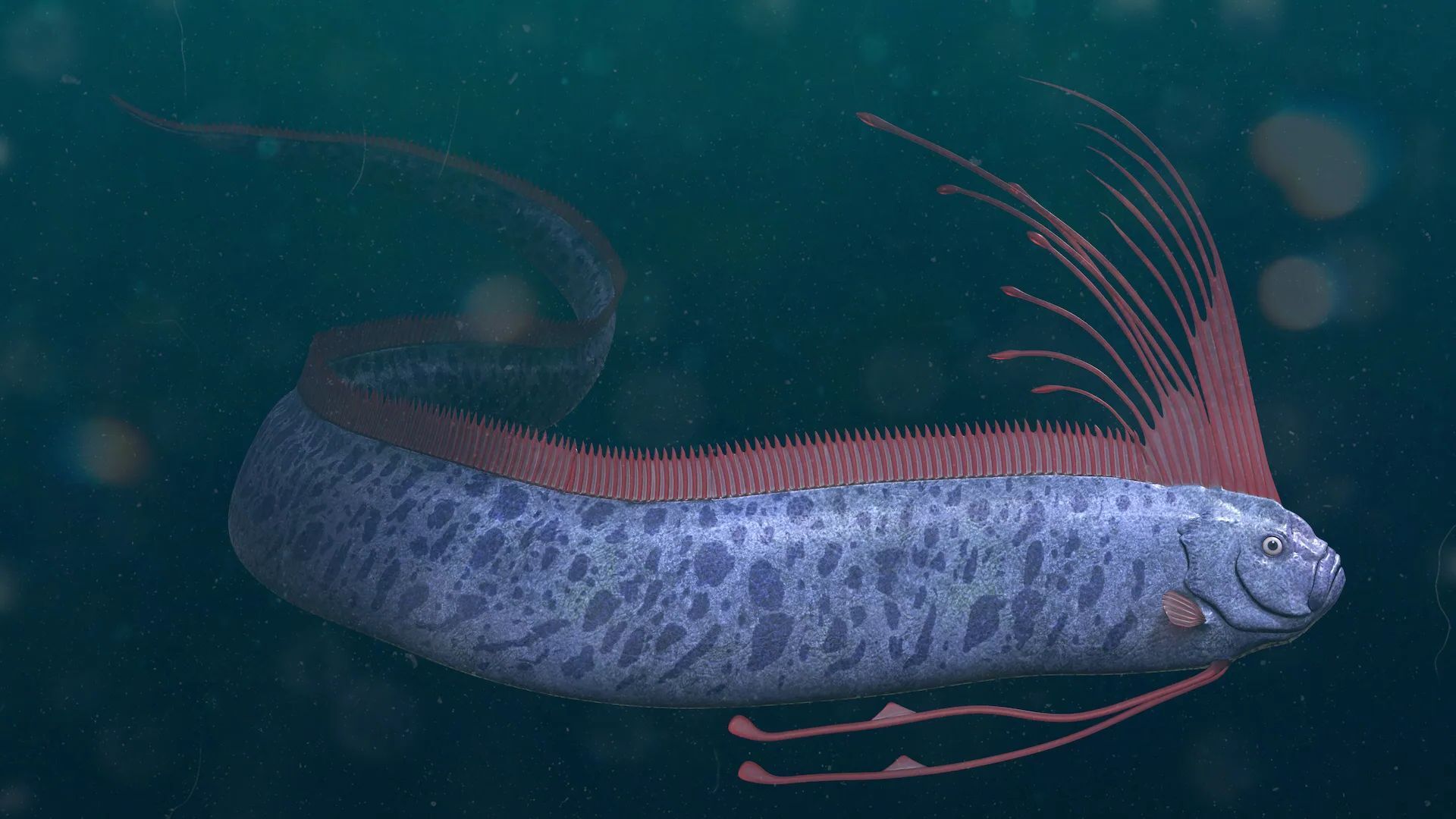

Seductive optics use impressive visuals, such as lifelike walking robots or expressive faces, to make AI seem more advanced or more human than it is. These visual cues exploit our tendency to personify human-like objects and to react emotionally even to simple facial features. Concepts such as the “Frankenstein Complex” and the “Uncanny Valley” describe how things that look human can provoke both fascination and fear. A robot can be made to look human, yet its face tells us nothing about the underlying technology. Marketers often exploit this to oversell the capabilities of any machine that resembles a human.

To avoid being misled, it is critical to separate the flashy packaging from the actual technology underneath.

Seemingly true claims may be technically accurate but framed to mislead, often by exaggerating or omitting key details. These are half-truths. In AI reporting, a headline may announce a breakthrough that the technology does not actually deliver, while the disclaimers are buried deep within the article.

A critical reader must look past hype and examine full context to avoid being misled by claims that sound amazing but don’t match reality.

Citation bluffing cites impressive-sounding sources to support misleading claims. In AI reporting, headlines may suggest breakthroughs like solving open problems in mathematics while actual AI contributions are far more limited. Readers should question whether cited sources truly say what’s claimed.

Small-silo ignorance occurs when experts speak confidently outside their field without proper understanding. Fame or brilliance in one area does not equal expertise in another, especially in complex topics like AI. Even scientists can make bold, misleading claims in areas outside their silo of expertise.

This filter reminds readers to evaluate ideas based on evidence, not just the speaker’s reputation or credentials.

Not all sources are equally reliable; this filter warns readers to assess the credibility, accuracy, and motives behind what they read.

Conflicts of interest can fuel AI hype when researchers, journalists, or institutions benefit from dramatic claims, whether through funding, attention, or prestige. Even respected academics may overstate results to impress peers or funding sources. Heads of large AI companies such as xAI (maker of Grok) and OpenAI will spin news to align with their companies’ bottom lines. This filter reminds us to ask: who gains from this message?

These filters are not meant to reject progress or innovation, but to separate genuine AI advances from exaggerated claims and ideological noise.

Robert J. Marks, Ph.D., is Distinguished Professor at Baylor University and senior fellow and director of the Bradley Center for Natural & Artificial Intelligence. He is the author of Non-Computable You: What You Do That Artificial Intelligence Never Will and Neural Smithing.